This version (2018/01/31 11:13) is a draft.

Storage infrastructure

- Article written by Siegfried Reinwald (VSC Team) (last update 2017-04-27 by sr).

Storage hardware

- Storage on VSC-3

- 9 servers for $HOME

- 8 servers for $GLOBAL

- 17 servers for $BINFS/$BINFL

- ~ 800 spinning disks

- ~ 100 SSDs

Storage targets

- Several storage targets on VSC-3: $HOME, $TMPDIR, $SCRATCH, $GLOBAL, $BINFS, $BINFL

- For different purposes

- Small Files

- Huge Files / Streaming Data

- Random I/O
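To see where these targets point in your own session, you can print each variable. The following is a minimal sketch in POSIX shell; the helper name show_target is hypothetical, and a variable may simply be unset on nodes or for projects where the target is not available.

```shell
# Print the path behind one storage-target variable, or a note if it is unset.
# show_target is a hypothetical helper name used only for this illustration.
show_target() {
    name=$1
    # Indirect lookup: read the variable whose name is stored in $name.
    eval "value=\${$name:-}"
    if [ -n "$value" ]; then
        printf '%-8s %s\n' "$name" "$value"
    else
        printf '%-8s (not set)\n' "$name"
    fi
}

# Walk over the storage targets named in this article.
for target in HOME TMPDIR SCRATCH GLOBAL BINFS BINFL; do
    show_target "$target"
done
```

Running this inside a job is more informative than on a login node, because $TMPDIR in particular can differ between the two.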

Storage performance

The HOME Filesystem

- Use for jobs that are not I/O intensive

- Basically NFS exports over InfiniBand (no RDMA)

- Targets with up to 24 Disks (RAID-6 on VSC-3)

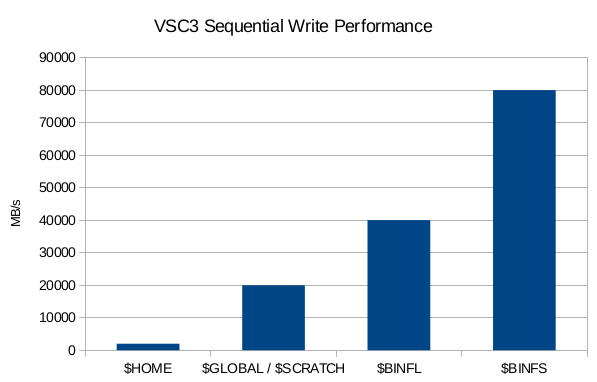

- Up to 2 Gigabyte/second write speed

- Logical volumes of projects are distributed among the servers

- Each logical volume belongs to 1 NFS server

- Accessible with the $HOME environment variable: /home/lv70XXX/username

The GLOBAL and SCRATCH filesystem

- Use for I/O intensive jobs

- ~ 500 TB Space (default quota is 500GB/project)

- Can be increased on request (subject to availability)

- BeeGFS Filesystem

- Metadata Servers

- Metadata on SSDs (RAID-1)

- 8 Metadata Targets for VSC-3

- Object Storages

- Disk Storages (RAID-6 on VSC-3)

- VSC-3: 12 Disks per Target / 4 Targets per Server / 8 Servers total

- Up to 20 Gigabyte/second write speed

- Accessible via the $GLOBAL and $SCRATCH environment variables

- $GLOBAL … /global/lv70XXX/username

- $SCRATCH … /scratch
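The layout figures for the object storage translate into disk counts with a little arithmetic. The sketch below assumes that every target is a single RAID-6 group, so the capacity of two disks per target goes to parity; the function name raid6_layout is made up for this illustration.

```shell
# raid6_layout DISKS_PER_TARGET TARGETS_PER_SERVER SERVERS
# Hypothetical helper: derives target and disk counts from the layout figures.
raid6_layout() {
    disks_per_target=$1
    targets_per_server=$2
    servers=$3
    targets=$((targets_per_server * servers))
    total_disks=$((disks_per_target * targets))
    # Assumption: one RAID-6 group per target, i.e. two disks' worth of parity.
    data_disks=$(( (disks_per_target - 2) * targets ))
    echo "targets=$targets total_disks=$total_disks data_disks=$data_disks"
}

# VSC-3 GLOBAL/SCRATCH: 12 disks per target, 4 targets per server, 8 servers
raid6_layout 12 4 8
```

For the VSC-3 figures this reports 32 targets, 384 disks in total and 320 disks' worth of data capacity.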

The BINFL filesystem

- Specifically designed for Bioinformatics applications

- Use for I/O intensive jobs

- ~ 1 PB Space (default quota is 10GB/project)

- Can be increased on request (subject to availability)

- BeeGFS Filesystem

- Metadata Servers

- Metadata on Datacenter SSDs (RAID-10)

- 8 Metadata Servers

- Object Storages

- Disk Storages configured as RAID-6

- 12 Disks per Target / 1 Target per Server / 16 Servers total

- Up to 40 Gigabyte/second write speed

- Accessible via the $BINFL environment variable

- $BINFL … /binfl/lv70XXX/username

The BINFS filesystem

- Specifically designed for Bioinformatics applications

- Use for very I/O intensive jobs

- ~ 100 TB Space (default quota is 2GB/project)

- Can be increased on request (subject to availability)

- BeeGFS Filesystem

- Metadata Servers

- Metadata on Datacenter SSDs (RAID-10)

- 8 Metadata Servers

- Object Storages

- Datacenter SSDs are used instead of traditional disks.

- No redundancy. See it as (very) fast and low-latency scratch space. Data may be lost after a hardware failure.

- 4x Intel P3600 2TB Datacenter SSDs per Server

- 16 Storage Servers

- Up to 80 Gigabyte/second via OmniPath Interconnect

- Accessible via the $BINFS environment variable

- $BINFS … /binfs/lv70XXX/username

The TMP filesystem

- Use for

- Random I/O

- Many small files

- Size is up to 50% of main memory

- Data gets deleted after the job

- Write results to $HOME or $GLOBAL

- Disadvantages

- Space is consumed from main memory

- Accessible with the $TMPDIR environment variable
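A common pattern for $TMPDIR is to stage the input in, work on the local copy, and copy the results out before the job finishes. The following is only a sketch: the function name stage_in_and_out, the file name result.txt and the wc placeholder workload are assumptions for illustration, and the input has to fit into the share of main memory that $TMPDIR may use.

```shell
# stage_in_and_out INPUT_FILE RESULT_DIR
# Hypothetical helper: copy INPUT_FILE into $TMPDIR, run a placeholder
# workload on the local copy, and save the result before the job ends.
stage_in_and_out() {
    input=$1
    result_dir=$2
    workdir="$TMPDIR/work.$$"
    mkdir -p "$workdir" "$result_dir" || return 1

    # Stage in: from here on all I/O hits the in-memory filesystem.
    cp "$input" "$workdir/" || return 1
    local_input="$workdir/$(basename "$input")"

    # Placeholder for the real application. A program doing random I/O
    # or creating many small files would run here on "$local_input".
    wc -c < "$local_input" > "$workdir/result.txt" || return 1

    # Stage out: $TMPDIR is deleted after the job, so copy results first.
    cp "$workdir/result.txt" "$result_dir/" || return 1
    rm -rf "$workdir"
}

# Example call inside a job script (paths are illustrative only):
# stage_in_and_out "$GLOBAL/input.dat" "$GLOBAL/results"
```

Removing the working directory at the end returns the memory to the job, which matters when the application itself needs most of the node's RAM.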

Storage exercises

In these exercises we try to measure the performance of the different storage targets on VSC-3. For that we will use the “ior” application (https://github.com/LLNL/ior) which is a standard benchmark for distributed storage systems.

“ior” for these exercises has been built with gcc-4.9 and openmpi-1.10.2 so load these 2 modules first:

    module purge
    module load gcc/4.9 openmpi/1.10.2

Now extract the storage exercises to your own folder.

    mkdir my_directory_name
    cd my_directory_name
    tar xf /home/lv70824/training/examples/08_storage_infrastructure/Storage_Exercises.tar.xz

Keep in mind that the results will vary, because there are other users working on the storage targets.

Exercise 1 - Sequential I/O performance hands-on

We will now measure the sequential performance of the different storage targets on VSC-3.

a. With one process

    cd 01_SequentialStorageBenchmark
    # Run the test-script
    ./run_exercise1a.sh
    # Wait to complete and look at the output afterwards
    cat Exercise_1a.result

b. With 8 processes

    ./run_exercise1b.sh
    # Wait to complete and look at the output afterwards
    cat Exercise_1b.result

Take your time and compare the outputs of the 2 different runs. What can you deduce about the storage targets on VSC-3?

Exercise 1 - Sequential I/O performance discussion

Discuss the following questions with your partner:

- The performance of which storage targets improves with the number of processes? Why?

- What could you do to further improve the performance of the sequential write throughput? What could be a problem with that?

- Bonus question: $TMPDIR seems to scale pretty well with the number of processes although it's an in-memory filesystem. Why is that happening?

Exercise 1 - Sequential I/O performance results

Exercise 1a:

    HOME:   Max Write: 237.37 MiB/sec (248.91 MB/sec)
    GLOBAL: Max Write: 925.64 MiB/sec (970.60 MB/sec)
    BINFL:  Max Write: 1859.69 MiB/sec (1950.03 MB/sec)
    BINFS:  Max Write: 1065.61 MiB/sec (1117.37 MB/sec)
    TMP:    Max Write: 2414.70 MiB/sec (2531.99 MB/sec)

Exercise 1b:

    HOME:   Max Write: 371.76 MiB/sec (389.82 MB/sec)
    GLOBAL: Max Write: 2195.28 MiB/sec (2301.91 MB/sec)
    BINFL:  Max Write: 2895.24 MiB/sec (3035.88 MB/sec)
    BINFS:  Max Write: 2950.23 MiB/sec (3093.54 MB/sec)
    TMP:    Max Write: 16764.76 MiB/sec (17579.12 MB/sec)
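One way to compare the two runs is the ratio of the 8-process result to the 1-process result. The sketch below applies that ratio to the sample figures listed for Exercise 1a and 1b (your own numbers will differ); the helper name speedup is hypothetical.

```shell
# speedup ONE_PROCESS_MIBS EIGHT_PROCESS_MIBS
# Hypothetical helper: prints the throughput ratio with two decimals.
speedup() {
    LC_ALL=C awk -v one="$1" -v eight="$2" \
        'BEGIN { printf "%.2f\n", eight / one }'
}

# Sample write throughputs in MiB/sec (1 process, then 8 processes)
printf 'HOME   %s\n' "$(speedup 237.37 371.76)"
printf 'GLOBAL %s\n' "$(speedup 925.64 2195.28)"
printf 'BINFL  %s\n' "$(speedup 1859.69 2895.24)"
printf 'BINFS  %s\n' "$(speedup 1065.61 2950.23)"
printf 'TMP    %s\n' "$(speedup 2414.70 16764.76)"
```

With the sample figures, the ratio ranges from about 1.56 for BINFL to about 6.94 for TMP, against an ideal value of 8.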

Exercise 2 - Random I/O performance hands-on

We will now measure the storage performance when confronted with tiny 4-kilobyte random writes.

a. With one process

    cd 02_RandomioStorageBenchmark
    # Run the Test
    ./run_exercise2a.sh
    # Wait to complete and look at the output afterwards
    cat Exercise_2a.result

b. With 8 processes

    ./run_exercise2b.sh
    # Wait to complete and look at the output afterwards
    cat Exercise_2b.result

Take your time and compare the outputs of the 2 different runs. Do additional processes increase the write throughput?

Now compare your results to the sequential run in exercise 1. What can you deduce about random I/O versus sequential I/O on the VSC-3 storage targets?

Exercise 2 - Random I/O performance discussion

Discuss the following questions with your partner:

- Which storage targets on VSC-3 are especially suited for random I/O?

- Which storage targets should never be used for random I/O?

- You have a program that needs 32 gigabytes of RAM and does heavy random I/O on a 10-gigabyte file stored on $GLOBAL. How could you speed up your application?

- Bonus question: Why are SSDs so much faster than traditional disks when it comes to random I/O? (A modern datacenter SSD can deliver ~1000 times more IOPS than a traditional disk.)

Exercise 2 - Random I/O performance results

Exercise 2a:

    HOME:   Max Write: 216.06 MiB/sec (226.56 MB/sec)
    GLOBAL: Max Write: 56.13 MiB/sec (58.86 MB/sec)
    BINFL:  Max Write: 42.70 MiB/sec (44.77 MB/sec)
    BINFS:  Max Write: 41.39 MiB/sec (43.40 MB/sec)
    TMP:    Max Write: 1428.41 MiB/sec (1497.80 MB/sec)

Exercise 2b:

    HOME:   Max Write: 249.11 MiB/sec (261.21 MB/sec)
    GLOBAL: Max Write: 235.51 MiB/sec (246.95 MB/sec)
    BINFL:  Max Write: 414.46 MiB/sec (434.59 MB/sec)
    BINFS:  Max Write: 431.59 MiB/sec (452.55 MB/sec)
    TMP:    Max Write: 10551.71 MiB/sec (11064.27 MB/sec)
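Because every write in this exercise has the same size, throughput can be converted into write operations per second. The sketch below assumes that a 4-kilobyte request means 4 KiB (4096 bytes), so 1 MiB/sec corresponds to 256 operations per second; the helper name iops_4k is hypothetical, and the inputs are the sample Exercise 2a figures.

```shell
# iops_4k THROUGHPUT_MIBS
# Hypothetical helper: converts MiB/sec into 4 KiB operations per second.
# Assumption: each request is exactly 4096 bytes, so 1 MiB holds 256 requests.
iops_4k() {
    LC_ALL=C awk -v mibs="$1" 'BEGIN { printf "%.0f\n", mibs * 1024 / 4 }'
}

# Sample single-process results from Exercise 2a (MiB/sec)
printf 'HOME   %s\n' "$(iops_4k 216.06)"
printf 'GLOBAL %s\n' "$(iops_4k 56.13)"
printf 'BINFL  %s\n' "$(iops_4k 42.70)"
printf 'BINFS  %s\n' "$(iops_4k 41.39)"
printf 'TMP    %s\n' "$(iops_4k 1428.41)"
```

Expressing the results as operations per second makes them easier to compare with the IOPS figures that disk and SSD vendors quote.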